A few weeks ago, U.S. Attorney General William Barr joined his counterparts from the U.K. and Australia to publish an open letter addressed to Facebook. The Barr letter represents the latest salvo …


Source: Can end-to-end encrypted systems detect child sexual abuse imagery?

I’ve argued that FAANG companies can continuously scan their networks for garbage, but won’t because it’s expensive. Meanwhile, they’re sitting on figurative mountains of cash. A well-respected cryptologist dove into the details of whether you could do automatic image recognition in the face of end-to-end encryption. While that’s an intriguing complication that I’m glad to see people working out, I don’t care about that part. What I do care about is how computationally expensive the process is. He summarizes thus:

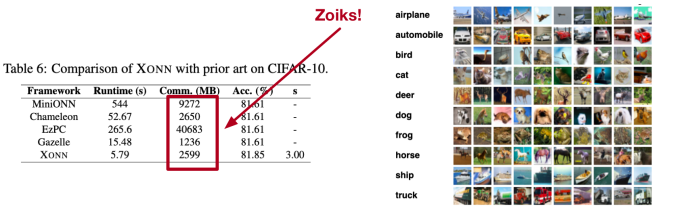

Despite the relatively small size of these problem instances, the overhead of using MPC turns out to be pretty spectacular. Each classification requires several seconds to minutes of actual computation time on a reasonably powerful machine — not a trivial cost, when you consider how many images most providers transmit every second.
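To put "not a trivial cost" in rough terms, here is a back-of-the-envelope sketch. The image rates and per-image times below are hypothetical placeholders, not figures from the quoted article; the only thing borrowed from it is the "several seconds to minutes" range. The arithmetic is just arrival rate times time per item, which gives the average number of machines kept busy.

```python
def machines_needed(images_per_second: float, seconds_per_image: float) -> float:
    """Average number of machines kept busy: arrival rate x time per image."""
    return images_per_second * seconds_per_image


# Hypothetical inputs for illustration only, not measured provider traffic.
hypothetical_rates = (100, 1_000, 10_000)  # images per second
hypothetical_costs = (5, 60)               # seconds of compute per image

for rate in hypothetical_rates:
    for cost in hypothetical_costs:
        busy = machines_needed(rate, cost)
        print(f"{rate:>6} images/s at {cost:>2} s each -> {busy:>9,.0f} machines busy")
```

Whatever the real numbers are, the cost scales linearly with both inputs, so the question is how big a bill that is, not whether it can be done at all.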

So, yes, this can be done. Heck, Facebook does facial recognition all the time on uploaded photos, and suggests people’s names to you to check its work, and this is just a subset of the larger problem. Admittedly, the amount of memory needed to reduce an image to a digital fingerprint surprises me, but, again, it’s simply not beyond the wit of man to do it; it’s a lack of will to spend the money. So they continue to use a small army of meat-space computers to do this, subjecting them to the absolute utter depravity of man, and catching only a fraction of the illegal filth.
